Explore how we've helped startups and enterprises build scalable solutions that handle millions of users and transactions

Semantic Autonomy for Airport Logistics

VLM-based perception pipeline running on NVIDIA Orin (45W) to resolve "Ghost Braking" in autonomous baggage fleets.

The Challenge

A logistics client faced a "Ghost Braking" crisis. Legacy perception (YOLO+LiDAR) failed to distinguish between hazardous obstacles and harmless environmental noise (exhaust steam, shadows) on the tarmac, causing unacceptable downtime.

Our Solution

Architected a "Hybrid Decoupled Brain" around a Vision-Language Model (VLM): a frozen CLIP backbone, a Q-Former to compress visual tokens, and a LoRA-tuned Llama-3 model for Chain-of-Thought reasoning. Optimized for the edge with 4-bit AWQ quantization and Taylor-expansion pruning to fit within the Orin's 45W power envelope.
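To make the pruning step concrete, here is a minimal sketch of first-order Taylor importance scoring, where a channel's importance is approximated by the magnitude of the sum of weight times gradient. It is a toy in plain Python, not the production pipeline; the function names, the `keep_ratio` parameter, and the numbers are illustrative assumptions.

```python
def taylor_importance(weights, grads):
    """First-order Taylor score per channel: |sum(w * dL/dw)|."""
    return [abs(sum(w * g for w, g in zip(ws, gs)))
            for ws, gs in zip(weights, grads)]


def prune_channels(weights, grads, keep_ratio):
    """Return the sorted indices of the channels to keep."""
    scores = taylor_importance(weights, grads)
    n_keep = max(1, round(len(scores) * keep_ratio))
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:n_keep])


# Toy layer: 4 output channels with 3 weights each (made-up values).
weights = [[0.5, -0.2, 0.1], [0.01, 0.02, -0.01], [1.0, 0.8, -0.5], [0.3, 0.3, 0.3]]
grads = [[0.1, 0.1, 0.1], [0.5, 0.5, 0.5], [0.2, -0.1, 0.4], [0.0, 0.01, 0.0]]
kept = prune_channels(weights, grads, keep_ratio=0.5)
```

In practice the gradients would be accumulated over a calibration set, and pruning would typically be followed by a short fine-tuning pass to recover accuracy.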

Technologies Used

Interested in a similar solution?

Start a Conversation→

Autonomous Perception Stack

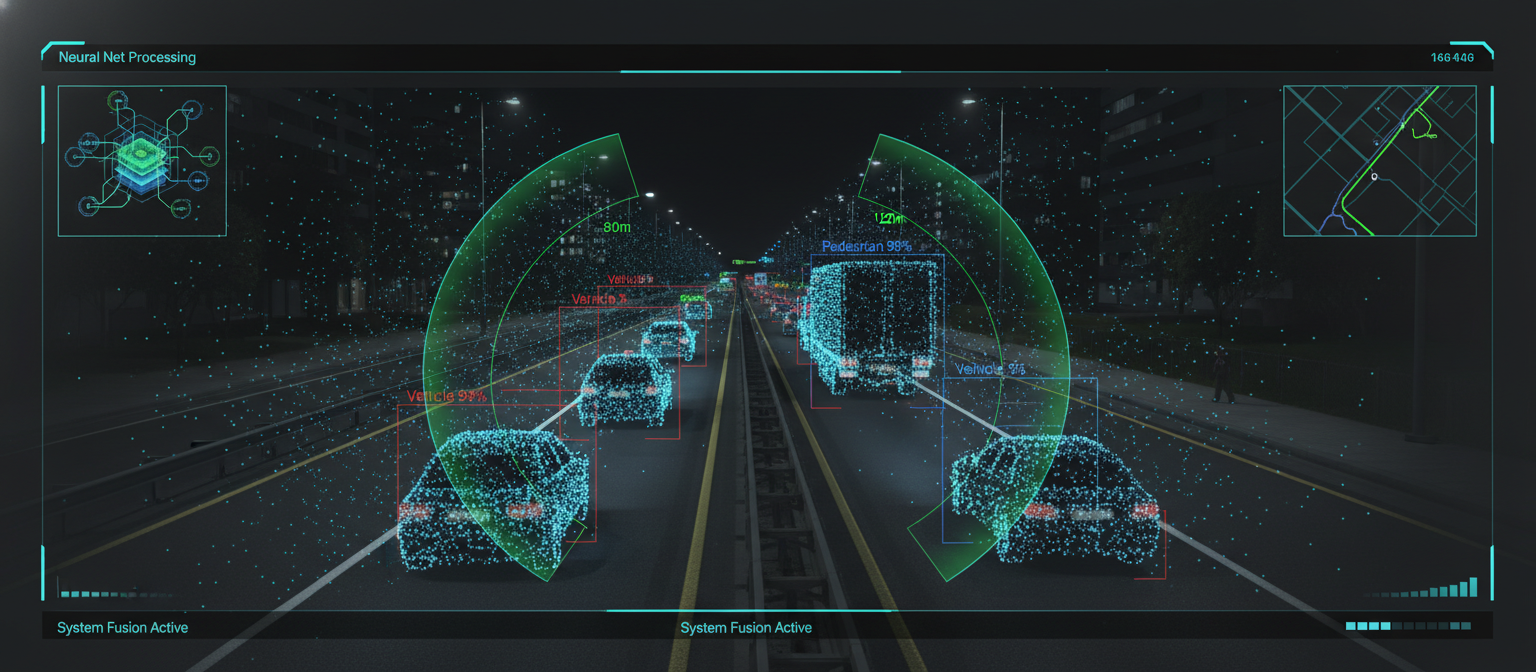

BEV Transformer with multi-sensor fusion for a Tier-1 automotive client, deployed to a production test fleet

The Challenge

A Tier-1 automotive client required a next-generation perception pipeline capable of fusing multi-camera inputs into a unified spatial representation for real-time object detection and path planning. Their existing stack suffered from high inference latency and could not produce coherent 3D scene understanding from 2D camera feeds in complex urban scenarios.

Our Solution

Architected a BEV (Bird's Eye View) Transformer performing end-to-end learned sensor fusion across multi-camera inputs. The pipeline produces a unified spatial representation for simultaneous object detection and path planning. Implemented cross-attention mechanisms for robust spatial reasoning across viewpoints. Optimized the full inference graph with TensorRT INT8 quantization for edge deployment on production vehicle hardware.
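The cross-attention idea can be sketched in a few lines: each BEV grid cell issues a query, and camera features supply the keys and values. This is plain scaled dot-product attention in pure Python; it deliberately omits camera geometry, learned projections, and multi-head structure, and all names and values are illustrative assumptions rather than the deployed model.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """Scaled dot-product attention: one output row per query."""
    d = len(keys[0])
    out = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        out.append([sum(wj * v[c] for wj, v in zip(w, values))
                    for c in range(len(values[0]))])
    return out


# Two BEV cell queries attending over three camera feature vectors (toy data).
bev_queries = [[1.0, 0.0], [0.0, 1.0]]
camera_keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
camera_values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
fused = cross_attention(bev_queries, camera_keys, camera_values)
```

Each fused row is a weighted average of camera features, so a BEV cell draws most heavily on the views whose keys align with its query.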

Technologies Used

Sovereign Enterprise Neural Search

Local-first RAG architecture enforcing full data sovereignty across 50k+ documents for a regulated enterprise client

The Challenge

A regulated enterprise client needed a private, multi-tenant knowledge system to query 50,000+ internal documents with zero data leaving their infrastructure. Every existing SaaS solution posed data leakage risks incompatible with their compliance posture, and manual document retrieval was consuming thousands of hours across teams.

Our Solution

Built a sovereign, multi-tenant neural search engine enforcing full data sovereignty via a local-first RAG architecture. Orchestrated autonomous reasoning agents with multi-hop retrieval over fine-tuned embeddings and a vector store running entirely on-premises. Implemented strict tenant isolation at the embedding, retrieval, and generation layers to eliminate data leakage risks across the entire pipeline.
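Tenant isolation at the retrieval layer can be illustrated with a small sketch: the tenant filter is applied before any similarity ranking, so another tenant's chunks never enter the candidate set. The `Chunk` type, the in-memory store, and the tiny embeddings are hypothetical stand-ins for an on-premises vector store.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    tenant_id: str
    text: str
    embedding: tuple


def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def retrieve(store, tenant_id, query_embedding, top_k=2):
    """Filter by tenant first, then rank the remaining chunks by similarity."""
    candidates = [c for c in store if c.tenant_id == tenant_id]
    ranked = sorted(candidates,
                    key=lambda c: cosine(c.embedding, query_embedding),
                    reverse=True)
    return ranked[:top_k]


store = [
    Chunk("tenant_a", "A: travel policy", (1.0, 0.0)),
    Chunk("tenant_a", "A: expense limits", (0.7, 0.7)),
    Chunk("tenant_b", "B: travel policy", (1.0, 0.0)),
]
hits = retrieve(store, "tenant_a", (1.0, 0.0))
```

Filtering before ranking, rather than after, means a bug in the scoring code cannot surface another tenant's documents.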

Technologies Used

MEV High-Frequency Arbitrage Engine

Rust-based mempool decoder and MEV detection engine with sub-100ms end-to-end arbitrage execution

The Challenge

A DeFi-focused client needed a high-frequency engine capable of monitoring the Ethereum mempool in real-time to detect Maximal Extractable Value (MEV) opportunities. Existing tools introduced unacceptable latency, consistently missing time-critical arbitrage windows measured in single-digit milliseconds.

Our Solution

Engineered a high-frequency MEV arbitrage engine in Rust for maximum throughput. Built a custom mempool decoder that parses pending transactions, scores opportunities against a profitability model, and triggers real-time arbitrage execution. The Rust-based pipeline handles transaction decoding, opportunity classification, and execution bundling with sub-100ms end-to-end latency.
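As an illustration of what "scoring an opportunity against a profitability model" can look like, the sketch below prices a two-leg trade across two constant-product pools with a 0.3% fee and subtracts a gas cost. It is written in Python for brevity rather than Rust, and the pool layout, fee, and gas figure are assumptions for the example, not details of the production engine.

```python
def swap_out(amount_in, reserve_in, reserve_out, fee_bps=30):
    """Output of a constant-product swap after a fee, using integer math."""
    amount_in_after_fee = amount_in * (10_000 - fee_bps)
    return (amount_in_after_fee * reserve_out) // (
        reserve_in * 10_000 + amount_in_after_fee
    )


def arbitrage_profit(amount_in, pool_a, pool_b, gas_cost):
    """Buy the token on pool A, sell it on pool B.

    Each pool is (base_reserve, token_reserve). The result is in base units
    and can be negative, in which case the opportunity should be skipped.
    """
    tokens = swap_out(amount_in, pool_a[0], pool_a[1])
    base_back = swap_out(tokens, pool_b[1], pool_b[0])
    return base_back - amount_in - gas_cost


# Hypothetical reserves: the token is cheaper on pool A than on pool B.
pool_a = (1_000_000, 2_000_000)
pool_b = (1_000_000, 1_800_000)
profit = arbitrage_profit(10_000, pool_a, pool_b, gas_cost=100)
```

A real scorer would also search for the optimal trade size and account for competing bundles; this sketch only shows the pricing arithmetic.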

Technologies Used


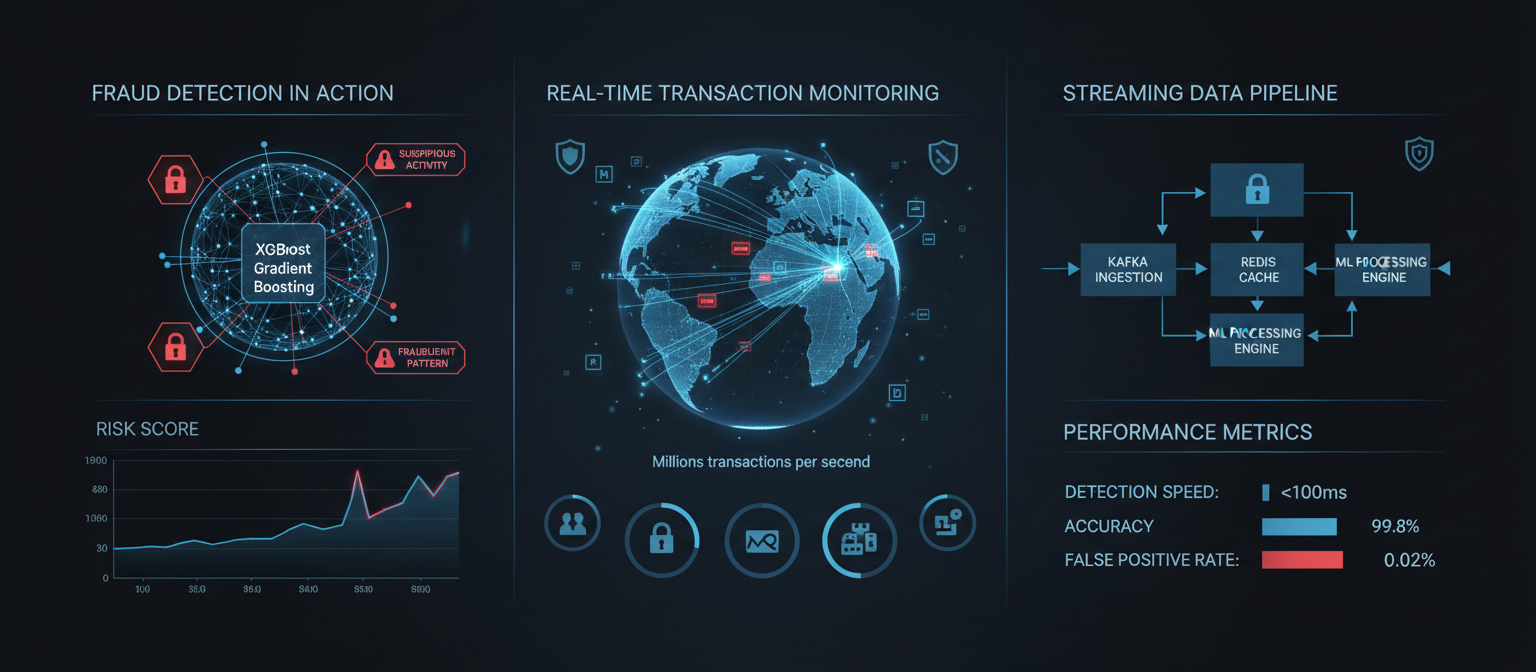

Real-Time Fraud Detection Pipeline

Streaming ML inference engine processing millions of transactions with sub-100ms latency

The Challenge

A fintech platform processing $2M+ in daily transactions was losing ~$120K per month to fraud. Their rule-based detection had a 40% false positive rate, blocking legitimate users and causing churn. They needed a real-time ML solution that could adapt to evolving fraud patterns while minimizing false positives.

Our Solution

Designed a real-time fraud detection pipeline using gradient boosting models (XGBoost) with a feature engineering layer processing 200+ signals including transaction patterns, device fingerprinting, and behavioral biometrics. Built a streaming inference architecture with Kafka and Redis for sub-100ms scoring. Implemented an online learning pipeline for continuous model updates based on fraud analyst feedback. Created an explainability layer showing feature attributions for flagged transactions.
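One kind of signal a streaming feature layer typically computes is transaction velocity over a sliding time window. The sketch below keeps a per-account window in memory with a deque; in a deployment like the one described, this state would more plausibly live in Redis. The class name and feature names are hypothetical.

```python
from collections import deque


class VelocityFeature:
    """Count and sum of transactions within the last `window_seconds`."""

    def __init__(self, window_seconds):
        self.window_seconds = window_seconds
        self.events = deque()  # (timestamp, amount), oldest first
        self.total = 0.0

    def update(self, timestamp, amount):
        """Add a transaction, evict expired ones, and return the features."""
        self.events.append((timestamp, amount))
        self.total += amount
        cutoff = timestamp - self.window_seconds
        while self.events and self.events[0][0] <= cutoff:
            _, old_amount = self.events.popleft()
            self.total -= old_amount
        return {"txn_count": len(self.events), "txn_sum": self.total}


feature = VelocityFeature(window_seconds=60)
first = feature.update(timestamp=0, amount=10.0)
second = feature.update(timestamp=30, amount=20.0)
third = feature.update(timestamp=61, amount=5.0)  # the t=0 event has expired
```

Features like these would be passed to the model alongside device and behavioral signals at scoring time.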

Technologies Used

AI-Powered Medical Imaging Analysis

Attention-based deep learning pipeline for radiology image triage and anomaly detection

The Challenge

A radiology group was facing a backlog of 2-3 days for CT and MRI scan analysis, with radiologists spending 15-20 minutes per scan. They needed an AI system to pre-screen scans, flag abnormalities, and prioritize urgent cases while integrating with their existing PACS system and meeting HIPAA compliance requirements.

Our Solution

Developed custom deep learning models in PyTorch for detecting abnormalities in CT and MRI scans. Built a DICOM preprocessing pipeline that normalizes across different scanner types. Implemented an attention-based neural network architecture that highlights regions of interest for radiologists. Created a secure PACS integration API. Deployed on HIPAA-compliant infrastructure with end-to-end encryption. Built a radiologist review interface showing AI confidence scores and highlighted regions.
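For CT data, a common normalization step is converting stored pixel values to Hounsfield units with the DICOM Rescale Slope and Rescale Intercept attributes, then applying an intensity window. The sketch below shows that arithmetic on plain lists; it is an assumption about what such a pipeline might include, it does not apply to MRI (which has no Hounsfield scale), and the window values are only an example.

```python
def to_hounsfield(raw_pixels, slope, intercept):
    """Apply the linear DICOM rescale: HU = raw * slope + intercept."""
    return [p * slope + intercept for p in raw_pixels]


def apply_window(hu_values, center, width):
    """Clip to [center - width/2, center + width/2] and scale to [0, 1]."""
    lo = center - width / 2
    hi = center + width / 2
    return [min(1.0, max(0.0, (v - lo) / (hi - lo))) for v in hu_values]


# Toy stored values with a typical slope/intercept pair for CT.
raw = [0, 1024, 2048]
hu = to_hounsfield(raw, slope=1, intercept=-1024)
normalized = apply_window(hu, center=40, width=400)
```

Reading the slope and intercept from each file's header, rather than hard-coding them, is what lets the same model consume images from different scanners.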

Technologies Used

Predictive Supply Chain Optimization Engine

ML-based demand forecasting with linear programming optimization across 15 distribution centers

The Challenge

A manufacturing company with 15 distribution centers was experiencing both stockouts and overstock, tying up $3M in excess inventory. Their legacy system used fixed reorder points that could not adapt to seasonal demand, promotions, or supply chain disruptions. Manual inventory decisions by regional managers led to inconsistent results.

Our Solution

Built an ML-based demand forecasting system using time series models (Prophet, LSTM) predicting demand at SKU-warehouse level considering seasonality, promotions, weather, and economic indicators. Developed an optimization engine using linear programming to calculate optimal reorder points and quantities. Created a real-time dashboard with inventory levels, predicted stockouts, and recommended actions. Integrated with their ERP system for automated purchase order generation.
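To show how a forecast feeds a reorder decision, here is the textbook closed-form reorder point: expected demand over the lead time plus a safety stock sized for a target service level. This is a simplified stand-in for the linear programming engine described above, and it assumes independent, normally distributed daily demand and a fixed lead time; the parameter names are illustrative.

```python
import math
from statistics import NormalDist


def reorder_point(mean_daily_demand, std_daily_demand, lead_time_days, service_level):
    """Lead-time demand plus safety stock for the given service level.

    `service_level` must be strictly between 0 and 1.
    """
    z = NormalDist().inv_cdf(service_level)
    safety_stock = z * std_daily_demand * math.sqrt(lead_time_days)
    return mean_daily_demand * lead_time_days + safety_stock


# Hypothetical SKU: forecast of 100 units/day, std dev 20, 4-day lead time.
rop_95 = reorder_point(100, 20, 4, service_level=0.95)
rop_99 = reorder_point(100, 20, 4, service_level=0.99)
```

With a per-SKU, per-warehouse forecast, the mean and standard deviation change over time, so the reorder point adapts to seasonality instead of staying fixed.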

Technologies Used

Gas-Optimized NFT Marketplace on Polygon

ERC-721A lazy-minting marketplace with virtual scrolling for 10K+ item collections

The Challenge

A digital art platform needed an NFT marketplace, but Ethereum gas fees ($50-200 per mint) made it economically infeasible. They required an affordable, fast solution with good liquidity. The previous prototype took 45 seconds to load listings and had no mobile optimization.

Our Solution

Architected the marketplace on Polygon with ERC-721A gas-optimized smart contracts reducing batch mint costs by 60%. Implemented lazy minting so artists create NFTs without upfront gas costs. Built IPFS integration for decentralized metadata storage. Developed a high-performance frontend with virtual scrolling for 10K+ item collections. Integrated MetaMask, WalletConnect, and credit card payments via Crossmint.
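The core of virtual scrolling is computing which rows intersect the viewport so that only those are rendered. The sketch below shows that calculation in Python for consistency with the other examples (a frontend would implement it in JavaScript or TypeScript); the fixed row height and the `overscan` buffer are assumptions.

```python
def visible_range(scroll_top, viewport_height, row_height, total_rows, overscan=3):
    """Return (first, last) row indices to render; `last` is exclusive.

    All sizes are integer pixels, and every row has the same height.
    """
    first = max(0, scroll_top // row_height - overscan)
    # Ceiling division finds the first row fully below the viewport.
    bottom_row = -(-(scroll_top + viewport_height) // row_height)
    last = min(total_rows, bottom_row + overscan)
    return first, last


# A 10,000-item collection with 100px rows in a 600px viewport.
at_top = visible_range(0, 600, 100, 10_000)
mid_list = visible_range(5_000, 600, 100, 10_000)
at_end = visible_range(999_400, 600, 100, 10_000)
```

However long the collection is, the number of rendered rows stays bounded by the viewport size plus the overscan buffer.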

Technologies Used


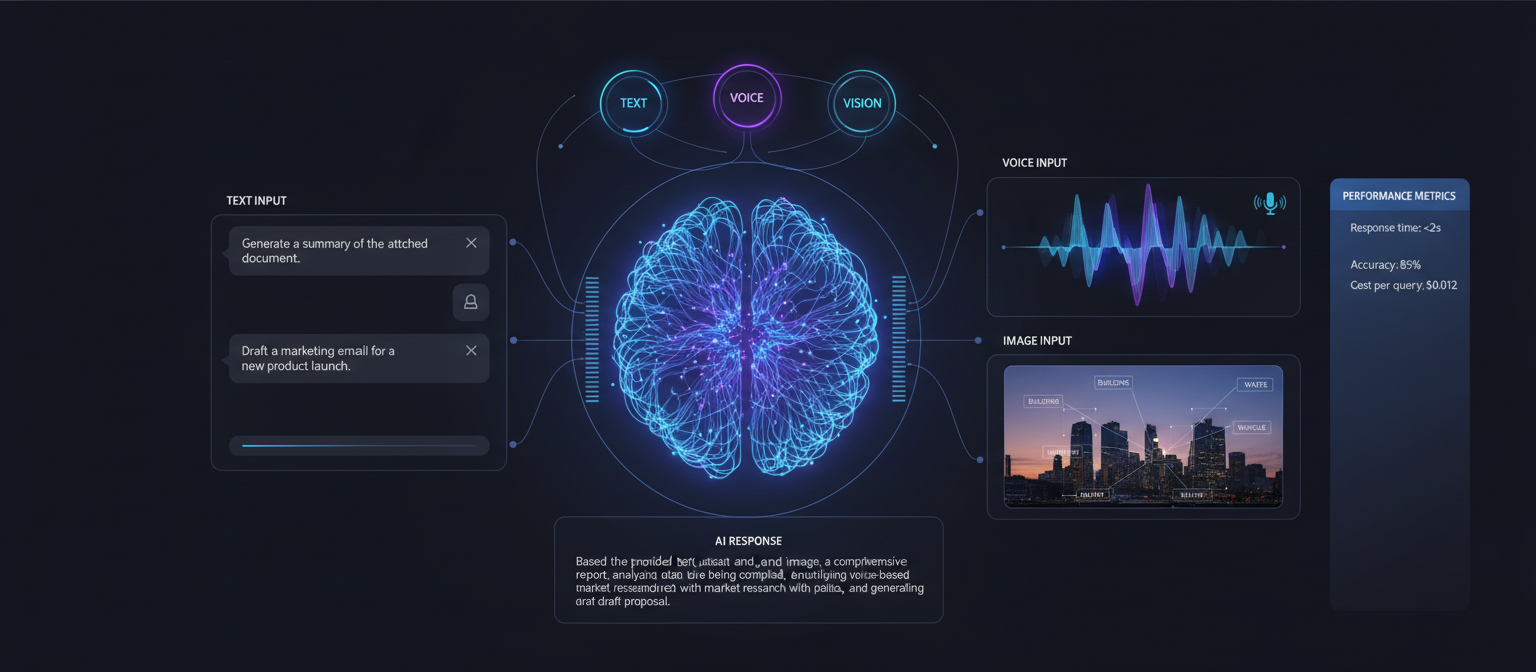

Multilingual Conversational AI Engine

Fine-tuned LLM with semantic retrieval handling 10K+ monthly conversations across 3 languages

The Challenge

An e-commerce platform with 50K monthly visitors was spending $25K/month on customer service agents handling repetitive queries. Human agents were overwhelmed during peak hours, leading to 2-4 hour response times and significant customer churn.

Our Solution

Built a conversational AI engine using a fine-tuned LLM trained on the product catalog, FAQ database, and 2 years of customer service transcripts. Integrated real-time order status lookup via API. Implemented semantic search over product specifications and return policies. Added multilingual support (English, Spanish, French). Created intelligent escalation logic routing complex issues to human agents with full conversation context.
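Escalation logic of this kind can be as simple as a routing function that hands off to a human when the topic is sensitive or the model's confidence is low, and attaches the transcript so the agent does not start from scratch. The keyword list, the threshold, and the return shape below are hypothetical.

```python
ESCALATION_KEYWORDS = ("refund", "chargeback", "legal")


def route(message, model_confidence, transcript, threshold=0.7):
    """Decide whether the bot or a human agent should handle the message."""
    reason = None
    if any(keyword in message.lower() for keyword in ESCALATION_KEYWORDS):
        reason = "sensitive_topic"
    elif model_confidence < threshold:
        reason = "low_confidence"
    if reason is None:
        return {"handler": "bot"}
    # Hand over the full conversation so the agent has context.
    return {
        "handler": "human",
        "reason": reason,
        "context": list(transcript) + [message],
    }


simple = route("Where is my order?", 0.9, [])
sensitive = route("I want a refund", 0.95, ["Hi, I need help"])
unsure = route("My widget hums oddly", 0.4, [])
```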

Technologies Used


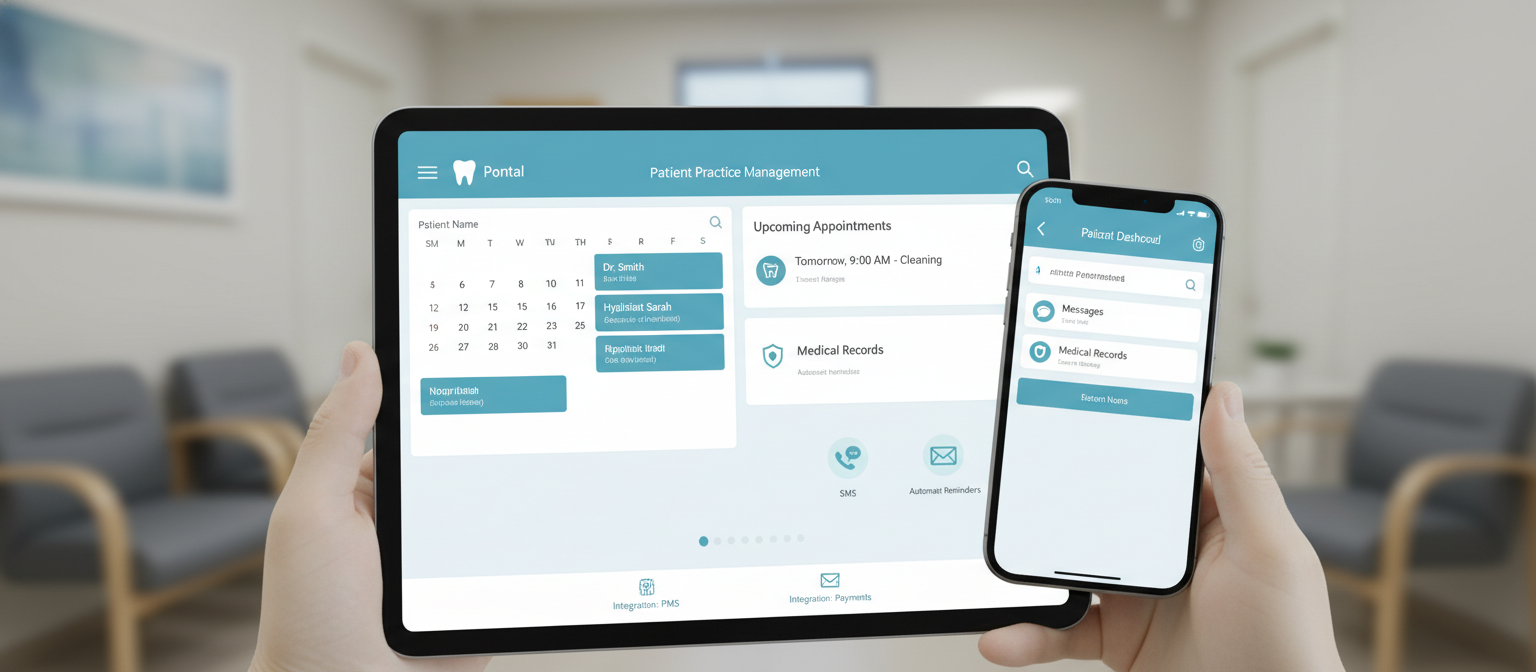

HIPAA-Compliant Distributed Scheduling Architecture

Event-driven scheduling engine with real-time slot locking across geographically distributed nodes

The Challenge

A multi-location healthcare provider faced critical data-consistency problems when synchronizing legacy HL7 FHIR systems with modern cloud interfaces. Concurrent booking requests across geographic nodes caused race conditions, double-bookings, and HIPAA audit failures. Their monolithic scheduling system could not scale without sacrificing data integrity.

Our Solution

Engineered an event-driven scheduling architecture using WebSockets for real-time slot locking to prevent race conditions across multiple geographic nodes. Implemented optimistic concurrency control with conflict resolution at the database layer. Built a FHIR-compliant integration bridge synchronizing state between legacy Practice Management Systems and the new cloud interface. Deployed on HIPAA-compliant infrastructure with end-to-end encryption, audit logging, and role-based access control.
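Optimistic concurrency control boils down to a versioned compare-and-set: a booking succeeds only if the slot's version is the one the client originally read. The in-memory store below is a single-process illustration; in a database this would usually be a conditional update on a version column. All class and method names are hypothetical.

```python
class VersionConflict(Exception):
    """Raised when a slot changed after the client read it."""


class SlotStore:
    def __init__(self):
        self._slots = {}  # slot_id -> (version, patient_id or None)

    def add_slot(self, slot_id):
        self._slots[slot_id] = (0, None)

    def read(self, slot_id):
        return self._slots[slot_id]

    def book(self, slot_id, patient_id, expected_version):
        """Book the slot only if it is free and unchanged since it was read."""
        version, holder = self._slots[slot_id]
        if version != expected_version or holder is not None:
            raise VersionConflict(slot_id)
        self._slots[slot_id] = (version + 1, patient_id)
        return version + 1


store = SlotStore()
store.add_slot("2024-05-01T09:00")
# Two clients read the same free slot at version 0.
seen_by_a, _ = store.read("2024-05-01T09:00")
seen_by_b, _ = store.read("2024-05-01T09:00")
store.book("2024-05-01T09:00", "patient_a", seen_by_a)
try:
    store.book("2024-05-01T09:00", "patient_b", seen_by_b)
    double_booked = True
except VersionConflict:
    double_booked = False
```

The losing client gets an explicit conflict and can be offered another slot, rather than silently overwriting the first booking.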

Technologies Used

Real-Time Inventory Synchronization Engine

Optimistic UI with server-side queueing for high-concurrency order bursts and edge-cached APIs

The Challenge

A retail platform experienced critical latency issues in inventory state management, leading to concurrency errors during high-traffic periods. Simultaneous purchase requests for the same SKU caused overselling, and the lack of real-time POS synchronization created persistent inventory drift between online and physical channels.

Our Solution

Implemented an optimistic UI update pattern with a server-side queueing system to handle high-concurrency order bursts. Built real-time inventory synchronization between the online platform and their POS system using webhooks and event sourcing. Integrated edge-cached APIs for sub-50ms read latency on product and inventory data. Deployed a conflict resolution layer ensuring eventual consistency across all sales channels.
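The server-side half of this pattern can be sketched as a queue that applies stock decrements one at a time, so two simultaneous orders for the last unit cannot both succeed. The client shows the order optimistically and then reconciles with the queue's verdict. The class below is an in-memory illustration with hypothetical names, not the production queueing system.

```python
from collections import deque


class InventoryQueue:
    """Serializes order processing so stock never goes negative."""

    def __init__(self, stock):
        self.stock = dict(stock)
        self.pending = deque()

    def submit(self, order_id, sku, qty):
        """Enqueue an order; the UI can show it as pending right away."""
        self.pending.append((order_id, sku, qty))

    def drain(self):
        """Process queued orders in arrival order and report each outcome."""
        results = {}
        while self.pending:
            order_id, sku, qty = self.pending.popleft()
            if self.stock.get(sku, 0) >= qty:
                self.stock[sku] -= qty
                results[order_id] = "confirmed"
            else:
                results[order_id] = "rejected"
        return results


queue = InventoryQueue({"SKU-1": 1})
queue.submit("order-1", "SKU-1", 1)
queue.submit("order-2", "SKU-1", 1)  # arrives in the same burst
outcomes = queue.drain()
```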

Technologies Used

High-Throughput NLP Data Pipeline

Kafka streaming pipeline with Elasticsearch indexing processing 50K+ unstructured documents daily

The Challenge

A client monitoring brand reputation across 500+ sources faced massive ingestion challenges with unstructured data streams arriving in varying schemas from Twitter, Reddit, news sites, and RSS feeds. Their manual monitoring process missed time-sensitive events and could not quantify sentiment at scale.

Our Solution

Built a Kafka streaming pipeline ingesting data from 500+ sources and feeding into an Elasticsearch cluster for real-time sentiment indexing. Implemented NLP sentiment analysis using fine-tuned BERT models for classification. Created keyword and entity extraction to surface trending topics and influencers. Built an automated alert system for negative sentiment spikes via Slack and email. Developed an analytics dashboard with sentiment trends, competitor comparisons, and exportable reports.
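One simple way to detect a negative sentiment spike is to compare the current window's share of negative mentions against recent history using a z-score. The sketch below shows only that check; the threshold, the minimum history length, and the sample numbers are assumptions, and delivery to Slack or email is left out.

```python
from statistics import mean, pstdev


def is_negative_spike(history, current, z_threshold=3.0, min_history=5):
    """True if `current` is unusually high relative to `history`.

    Both are shares of negative mentions per time window, in [0, 1].
    """
    if len(history) < min_history:
        return False  # not enough data to judge
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return current > mu
    return (current - mu) / sigma >= z_threshold


recent = [0.10, 0.12, 0.11, 0.09, 0.10, 0.11]
spike = is_negative_spike(recent, 0.40)
normal = is_negative_spike(recent, 0.11)
```

A baseline-relative check like this avoids alerting on brands whose mentions are persistently negative, where a fixed threshold would fire constantly.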

Technologies Used


Ready to Create Your Success Story?

Join the companies that have transformed their operations with our custom software solutions