Executive Summary

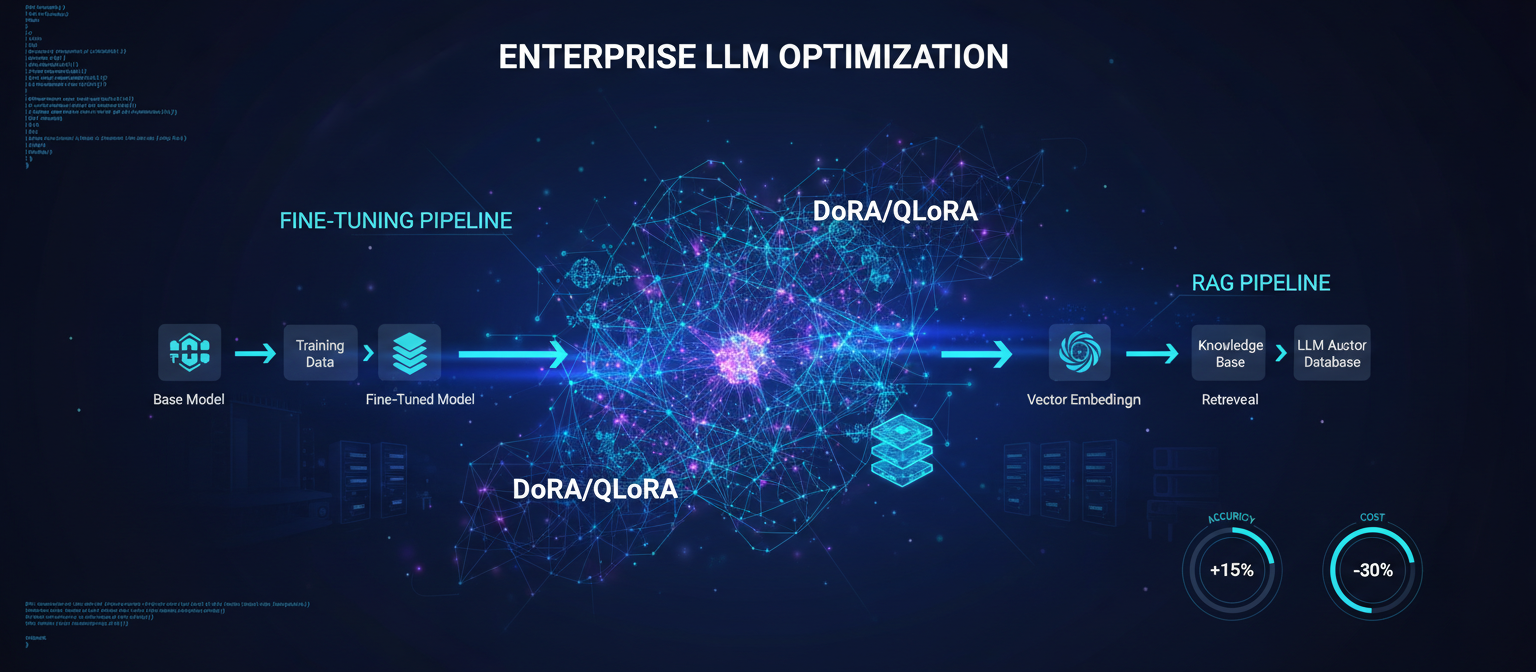

In 2025, fine-tuning large language models (LLMs) and implementing Retrieval-Augmented Generation (RAG) have evolved from experimental techniques into core capabilities for enterprises seeking to build customized AI solutions. When executed properly, these approaches enable domain-specific accuracy, brand-aligned responses, and enhanced efficiency in areas like customer service, legal analysis, and financial forecasting.

However, poor implementation can lead to wasted resources, compliance violations, or suboptimal performance. This comprehensive guide synthesizes the latest industry insights, research findings, and real-world deployment patterns to provide a complete roadmap for enterprise AI teams.

💡 What You'll Learn

We'll cover strategic decision-making, data engineering, advanced fine-tuning techniques, RAG implementation, safety considerations, evaluation frameworks, and production deployment - all with concrete implementation details and 2025 best practices.

The Strategic Foundation: Understanding the Adaptation Spectrum

Why "RAG vs Fine-Tuning" is an Outdated Question

The binary choice between "RAG" and "Fine-Tuning" is fundamentally outdated in 2025. Modern enterprises operate on a spectrum of adaptation techniques, each optimized for different use cases and constraints. Before committing to any training approach, you must evaluate the full range of options:

- • Prompt Engineering & System Design

- • Prompt/Context Caching

- • Long-Context Inference

- • RAG (Retrieval-Augmented Generation)

- • PEFT Fine-Tuning (LoRA/QLoRA/DoRA)

- • Continued Pre-training

- • Knowledge Distillation

The goal is to minimize training costs while maximizing leverage from these primitives, only investing in heavier techniques when absolutely necessary.

The 2025 Decision Matrix: A Comprehensive Framework

Enterprise AI Technique Selection Matrix

| Technique | Best For | Cost Profile |

|---|---|---|

| Prompt/Context Caching | Static prefixes: policies, style guides, API schemas | ~90% reduction after first call |

| Long-Context Inference | Large contracts, multi-repo codebases, multi-month logs | Pay-per-token; scales with context |

| RAG (Retrieval) | Fast-changing data: tickets, metrics, logs, news | $0.01-0.05 per query |

| PEFT Fine-Tuning | Behavioral changes: tone, format, schema adherence | $500-$2,000 one-time for 70B LoRA |

| Continued Pre-training | New domains: fictional languages, domain ontologies | $10,000+ for large-scale runs |

| Knowledge Distillation | Cost reduction: 8B models achieving 90-95% of 70B | 25x reduction in inference costs |

The Strategic Decision Workflow

📋 Simple Rule

- • If your problem is "the model doesn't know X" → Use RAG, long context, or caching

- • If your problem is "the model doesn't behave like Y" → Use PEFT fine-tuning

- • If your problem is "the model fundamentally doesn't speak this domain/language" → Consider continued pre-training (last resort)

Prototype with Base Capabilities

Start with system prompt + few-shot examples. Test with long context if needed (Gemini 1.5, Claude 3.x). Measure: Is the model capable in principle?

Add RAG for Freshness

Stand up a simple vector index (LanceDB or Weaviate) on key documents. Implement retrieval + answer synthesis. Evaluate: Test with a few dozen real user questions.

Layer Prompt Caching

Pin static "policy + examples" prefix (Claude/Gemini/Bedrock). Cache per-tenant or per-workflow. Measure: Token savings and latency improvements.

Consider Fine-Tuning Only When Necessary

When you see systematic, repeatable behavioral errors. Examples: "never stays in JSON schema", "can't reason over proprietary DSL syntax" despite careful prompting.

Enterprise Scenario Recommendations

Strategy by Scenario

| Scenario | Recommended Strategy | Rationale |

|---|---|---|

| General tasks with light customization | Prompt engineering + RAG | Fast, cost-effective |

| Moderate domain expertise (finance basics) | PEFT (LoRA) on Llama 3.1 70B | 80-90% of outcomes achievable |

| High-stakes domains (healthcare, legal) | Full fine-tuning + safety layers | Maximum accuracy needed |

| Ultra-sensitive (regulated industries) | On-premises fine-tuning | Compliance and data sovereignty |

Fine-Tuning Best Practices: From Strategy to Implementation

Strategically Select Your Base Model and Fine-Tuning Approach

Avoid rushing into fine-tuning. Many enterprise needs can be met through prompt engineering combined with RAG, which is faster and less resource-intensive. Only proceed if you require deep domain adaptation or strict behavioral alignment.

Model Selection Criteria

- • Closed Models (GPT-4o, Claude 3.5 Sonnet): Best for rapid prototyping, high-quality outputs, but limited customization

- • Open Models (Llama 3.1, Mistral): Full control, on-premises deployment, but require more engineering

- • 8B models: Good for specific tasks, cost-effective inference

- • 70B models: Better reasoning, more general capabilities

- • 400B+ models: Mostly used as teachers for distillation, not direct inference

Prioritize Data Quality as the Foundation

Data determines fine-tuning success - garbage in leads to garbage out. Enterprises must treat datasets as strategic assets, ensuring compliance and relevance.

Volume and Quality Thresholds

- • Aim for 5,000-50,000 high-quality examples

- • Focus on diversity to cover edge cases and multi-turn interactions

- • Quality > quantity: 500-1,000 excellent examples often outperform 10,000 mediocre ones

Synthetic Data Integration

📋 Data Composition Recipe

- • 70% human-curated demonstrations (from internal logs or SOPs)

- • 20% synthetic (evolved instructions via Evol-Instruct)

- • 10% adversarial examples for robustness

Compliance Measures

- • PII Redaction: Implement with tools like Presidio or Microsoft PIIDetect

- • Licensing Audits: Ensure all training data is properly licensed

- • Version Control: Use DVC or LakeFS for reproducibility

- • Data Residency: Maintain separate raw (locked down) and sanitized (for training) variants

Adopt Parameter-Efficient Fine-Tuning (PEFT) as Standard

Full fine-tuning is resource-heavy and often unnecessary. In 2025, PEFT methods dominate for their efficiency.

Preferred Technique: QLoRA (4-bit Quantization)

- •

rank=64-128(higher for complex reasoning, lower for simple classification) - •

alpha=16-32(usually r/2 or r) - •

dropout=0.05 - • Target all linear layers (q_proj, k_proj, v_proj, o_proj, gate_proj, up_proj, down_proj)

Top Tools and Frameworks

- • Unsloth: 2-3x speed gains for prototyping

- • Axolotl: YAML-based configuration, industry standard

- • Llama-Factory: User-friendly interface

- • Enterprise Platforms: Fireworks AI, Together AI

- • Open-Source: Hugging Face's PEFT library, Torchtune

Advanced PEFT: Beyond Vanilla LoRA

While QLoRA is the standard for memory efficiency, DoRA (Weight-Decomposed Low-Rank Adaptation) is the 2025 performance standard.

Technical Breakdown: LoRA vs. DoRA

LoRA

- • Decomposes weight updates (ΔW) into two low-rank matrices (A and B)

- • Freezes direction and magnitude together

- • Efficient but sometimes struggles to match full fine-tuning performance

DoRA (2025 Standard)

- • Separates the magnitude and direction of weights

- • Applies LoRA only to the direction component

- • Allows the magnitude to be trained more freely

- • Result: Achieves full fine-tuning performance with LoRA-like memory efficiency

- • No inference overhead relative to LoRA

Recommended Configuration (Llama 3.1 70B)

# Axolotl Config Snippet

adapter: qlora # or dora

lora_r: 64 # Rank: Higher (128) for complex reasoning

lora_alpha: 32 # Alpha: Usually r/2 or r

lora_dropout: 0.05

target_modules:

- q_proj

- k_proj

- v_proj

- o_proj

- gate_proj

- up_proj

- down_proj # Target ALL linear layers

gradient_accumulation_steps: 4

micro_batch_size: 2

learning_rate: 0.0002 # 2e-4 - Slightly higher for QLoRA

optimizer: adamw_8bit📋 Pragmatic Guidance

- • Small updates (style, tone, output format): rank 16-32, lower LR (5e-5-1e-4), 1-3 epochs

- • Moderate domain specialization (SQL, internal APIs): rank 32-64, LR 1-2e-4, 3-5 epochs

- • Heavy reasoning alignment/safety: rank 64-128, more steps with stronger regularization

RAG Implementation: Building Robust Retrieval-Augmented Generation Systems

Understanding the RAG Landscape

RAG has solidified its position as a cornerstone of enterprise AI, bridging the gap between static LLM knowledge and dynamic, proprietary data sources. By 2025, 60% of enterprise LLMs leverage RAG for tasks like customer support, legal analysis, and knowledge management.

RAG Paradigm Evolution

| Paradigm | Description | Best For |

|---|---|---|

| Naive RAG | Basic retrieval → augmentation → generation | Prototypes, low-stakes QA |

| Advanced RAG | Query rewriting, reranking, pre/post enhancements | Domain-specific apps (finance, healthcare) |

| Modular RAG | Swappable components, self-reflective agents | Agentic systems, multi-modal data |

Core Implementation Strategies

1. Data Ingestion and Preparation

Enterprise data is often unstructured (80% PDFs, memos, presentations), so ingestion must handle messiness while ensuring compliance.

Chunking Strategies

- • Avoid fixed-size chunks: Use semantic (sentence-based) or hierarchical (parent-child) methods

- • Late chunking with tools like Jina AI maintains relationships, boosting retrieval by 20-30%

- • For complex docs (tables, diagrams), preprocess with multi-modal LLMs

Metadata Enrichment

- • Tag chunks with categories, dates, or access levels

- • Example:

{"category": "legal", "pub_date": "2025-06-05"} - • Critical for multi-tenant systems

2. Indexing: Building Efficient Knowledge Stores

- • Managed Services: Pinecone or Weaviate for ease of use

- • On-Premises: Milvus or Qdrant for data sovereignty

- • Hybrid Indexing: Combine dense (semantic) + sparse (keyword) for acronyms and rare terms

- • Graph databases: Neo4j for relational data, enabling entity-linked retrieval

3. Retrieval: Precision Over Volume

⚠️ Critical Insight

Retrieval is 20% of the pipeline but 80% of accuracy challenges. Top-5 retrievals under 100ms; irrelevant docs can degrade generation by 30%.

- • Query Optimization: Rewrite queries with LLMs for expansion (synonyms) or decomposition

- • Hybrid and Reranking: Blend vector + keyword search, rerank with cross-encoders (e.g., Cohere Rerank)

- • Boosts precision by 15-25%

4. Frameworks and Tools

RAG Tooling (2025)

Evaluation and Monitoring

"You can't improve what you don't measure." Implement repeatable evals early.

- • Retrieval: Hit Rate, MRR (Mean Reciprocal Rank)

- • Generation: Faithfulness, Answer Relevance via RAGAS

- • End-to-End: BLEU/ROUGE, LLM-as-Judge

💡 Key Insight

In 2025, RAG isn't just a technique - it's the engine for trustworthy enterprise AI, delivering 80% of fine-tuning's value at 20% the cost.

Advanced Techniques: DoRA, Evol-Instruct, and Beyond

Data Engineering: The "Evol-Instruct" Paradigm

In 2025, manual labeling is too slow. The industry standard is Synthetic Data Evolution.

The "Golden Dataset" Recipe

Step 1: Seed Data (Human)

Collect 500–1,000 high-quality, human-written "Instruction → Response" pairs

Step 2: Synthetic Evolution (Machine)

Use a frontier model (GPT-4o, Claude 3.5 Sonnet) to "evolve" instructions:

- • Deepening: "Add constraints to this request"

- • Complicating: "Make the reasoning multi-step"

- • Concretizing: "Replace general concepts with specific edge cases"

Step 3: Filtering (Deduplication)

Run MinHash LSH (Locality Sensitive Hashing) to remove near-duplicates, preventing overfitting

Step 4: Format Standardization

Use ChatML format, the de-facto standard in 2025 for clearly defined role tokens

Implementation with Distilabel

from distilabel.pipeline import Pipeline

from distilabel.steps import LoadDataFromDicts

from distilabel.steps.tasks import EvolInstruct

from distilabel.llms import OpenAILLM

# 1. Your raw, messy internal data

raw_data = [

{"instruction": "How do I reset my password?", "response": "Go to settings..."},

{"instruction": "What is the Q3 revenue?", "response": "It was $4M..."}

]

with Pipeline(name="enterprise-evol-instruct") as pipeline:

# 2. Load Data

loader = LoadDataFromDicts(data=raw_data)

# 3. Evolve Instructions (The 2025 Magic)

evolver = EvolInstruct(

llm=OpenAILLM(model="gpt-4o"),

num_evolutions=2,

store_evolutions=True,

mutation_templates={

"constraint": "Add a specific constraint to this request...",

"reasoning": "Require multi-step reasoning to solve this..."

}

)

# 4. Connect steps

loader.connect(evolver)

# 5. Run Pipeline

distiset = pipeline.run()Why this matters: A model trained on evolved data outperforms a model trained on raw data by ~15-20% on reasoning benchmarks because it learns to handle complexity, not just recall facts.

Model Merging: The "TIES" Method

In 2025, you often don't train one monolithic model. Instead, train multiple specialized models (e.g., one for SQL, one for JSON, one for chatting) and merge them using MergeKit.

models:

- model: meta-llama/Meta-Llama-3.1-8B-Instruct

# Base model - no parameters needed

- model: ./my-sql-finetune-checkpoint

parameters:

density: 0.5 # Keep only 50% of changed weights

weight: 0.5

- model: ./my-json-finetune-checkpoint

parameters:

density: 0.5

weight: 0.5

merge_method: ties

base_model: meta-llama/Meta-Llama-3.1-8B-Instruct

parameters:

normalize: true

int8_mask: true

dtype: float16Benefits: Avoids catastrophic forgetting, combines specialized capabilities, single model deployment with multiple skills.

Safety and Alignment: Enterprise-Grade Security

Embed Safety and Alignment from the Start

Enterprises cannot afford ethical lapses or hallucinations, which remain key challenges in LLM integration.

Essential Safety Layers

Training Techniques

- • Constitutional AI: Train models to follow ethical principles

- • Self-Critique: Models evaluate their own outputs

- • Refusal Examples: Include 5-10% of data where the model politely refuses unsafe prompts

Inference Safeguards

- • Deploy Llama Guard 3 or NeMo Guardrails for real-time filtering

- • Multi-layer defense: data filters → refusal SFT → circuit-breaker → runtime heuristics → human escalation

Red-Teaming

- • Use tools like Garak or internal teams to simulate attacks

- • Address issues like catastrophic forgetting and alignment drift

Circuit Breakers & Representation-Level Safety

"Circuit breakers" are a new class of methods that manipulate internal activations to prevent harmful behavior, rather than only filtering outputs.

High-Level Pattern

- Identify Harmful Activation Regions using red-team prompts and gradient-based analysis

- Train Safety Steering Layers using LoRA/DoRA adapters to minimize harmful directions

- Integrate with PEFT Stack - safety adapters can coexist with capability adapters

Recent Results: Representation-steering fine-tuning (RepBend) can reduce jailbreak success by up to ~95% with minimal capability loss.

Defense-in-Depth Architecture

Multi-Layer Security Stack

Evaluation and Monitoring: Measuring Success

Implement Comprehensive Evaluation Pipelines

Measurement is critical - enterprises must quantify improvements to justify investments.

Evaluation Framework

General Benchmarks

- • MT-Bench: Multi-turn conversation evaluation

- • Arena-Hard: Capability assessment across diverse tasks

Domain-Specific

- • LegalBench: Legal reasoning tasks

- • MedQA: Medical question answering

- • Custom Metrics: Industry-specific tasks

Methods

- • Human Platforms: Argilla, Scale AI for high-quality annotations

- • Auto-Evals: G-Eval, Prometheus-v2 for scalable assessment

- • Safety Checks: HarmBench for adversarial evaluation

LLM-as-a-Judge: The 2025 Standard

Use a stronger model to grade the weaker, fine-tuned model.

The Implementation Pipeline

- Test Set: 200-500 "Golden Questions" that the model has never seen

- Inference: Run your fine-tuned model to generate answers

- Judge: Use GPT-4o or Prometheus-2 to score on Compliance, Faithfulness, Tone (1-5 scale)

- Metric: Track Win Rate vs. the Base Model

Key Metrics for 2025

- • Hallucination Rate: Measured via RAGAS scores

- • Token Efficiency: Did fine-tuning reduce tokens needed? (Often drops by 40%)

Observability: Production Monitoring

Key Metrics to Track

Tools: LangSmith or Phoenix for logging, WhyLabs for drift detection, TruLens for A/B testing.

Production Deployment: From Prototype to Scale

Production-Ready Deployment Stack

Recommended Tools

| Component | Tools | Purpose |

|---|---|---|

| Inference | vLLM, TensorRT-LLM | High-throughput serving |

| Orchestration | KServe, BentoML | Scaling and load balancing |

| Monitoring | Phoenix, LangSmith | Logging prompts/responses |

| Optimization | Quantization, A/B testing | Cost and performance |

vLLM & PagedAttention: The De-Facto Serving Stack

vLLM has become the default serving engine for many open-weight deployments thanks to:

- • PagedAttention: Manages KV cache like OS virtual memory

- • Continuous Batching: Dynamically merges new requests

- • Prefix Caching: Automatically reuses shared prefixes

- • Quantization Support: GPTQ, AWQ, INT4/INT8, FP8

python -m vllm.entrypoints.openai.api_server \

--model path/to/llama-3-8b-instruct \

--dtype bfloat16 \

--tensor-parallel-size 2 \

--max-model-len 8192 \

--api-key token123 \

--port 8000Speculative Decoding: Speeding Up Fine-Tuned Giants

Speculative decoding accelerates generation by letting a draft model propose multiple tokens and having a target model accept/reject them in chunks.

python3 -m vllm.entrypoints.openai.api_server \

--model /path/to/my-finetuned-70b-dora \

--tensor-parallel-size 4 \

--speculative-model meta-llama/Llama-3.2-1B-Instruct \

--num-speculative-tokens 5 \

--gpu-memory-utilization 0.90 \

--max-model-len 8192 \

--port 8000Hardware Sizing Guide (2025)

Hardware Requirements

| Model Size | Training | Serving |

|---|---|---|

| 8B Models | 1× A100/H100 or RTX 4090 with QLoRA | 1× 24-48GB GPU |

| 70B Models | 1-2× 80GB GPUs with QLoRA/DoRA | 2× H100 or 4× A100 80GB |

| 400B+ Models | 8× H100+ with FP8 | Teacher-only (distillation) |

Cost Optimization: Maximizing ROI

Fine-Tuning Costs Have Plummeted

💰 Typical Expenses

- • $1,000 one-time for 70B LoRA fine-tuning

- • $5,000/month hosting on 4× H100 GPUs for inference

The "Teacher-Student" Distillation: Biggest Cost Saver

The biggest cost saver in 2025 is Knowledge Distillation.

Typical 2025 Pipeline

- Choose a Strong Teacher: Proprietary model or massive open model (e.g., 400B)

- Generate Synthetic Labeled Data: Final answer, chain-of-thought, metadata

- Train an 8B-Class Student (QLoRA/DoRA): On teacher's outputs

- Evaluate: Prometheus 2 win-rate vs teacher and vs base 8B

- Deploy Student to Production

📊 ROI Example

- • 70B Model Inference: $0.005 / request

- • 8B Model Inference: $0.0002 / request

- • Savings: 25× reduction in recurring costs with 90-95% performance

Savings Strategies

Tiered Model Architecture

- • Use small models (7B) with RAG for 90% of queries (~$300/month)

- • Reserve large fine-tuned models for edge cases

- • Techniques like pruning and distillation further reduce overhead

Governance and Compliance: Enterprise Requirements

Regulatory Demands (GDPR, HIPAA)

Regulatory demands necessitate structured oversight.

Compliance Checklist

Multi-LLM Orchestration

Route queries across models for optimal performance, avoiding over-reliance on one.

- • Redundancy and fault tolerance

- • Cost optimization (route simple queries to cheaper models)

- • Specialization (different models for different tasks)

Implementation Roadmap: A Practical Guide

If you're building a serious enterprise LLM system in 2025, a sane roadmap looks like:

Phase 0 – Baseline

- • Strong base model via API

- • System prompt, few-shot examples

- • Manual evaluation with 50-100 real prompts

- • Goal: Establish baseline capabilities

Phase 1 – RAG + Caching

- • Stand up a simple vector DB; wire retrieval

- • Add prompt/context caching for static prefixes

- • Evaluate with RAGAS + LLM-as-a-Judge

- • Goal: Inject dynamic knowledge efficiently

Phase 2 – PEFT Fine-Tuning (DoRA/QLoRA)

- • Build golden dataset via Evol-Instruct + filtering

- • Run safety + refusal fine-tune first, then capability specialization

- • Integrate with vLLM serving

- • Goal: Achieve behavioral alignment

Phase 3 – Safety Hardening

- • Adversarial red-teaming framework

- • Circuit-breaker/representation-steering adapters

- • Logging + human review for sensitive channels

- • Goal: Enterprise-grade security

Phase 4 – Distillation & Optimization

- • Distill into an 8B student for 80-95% of traffic

- • Use speculative decoding + vLLM quantization

- • Continuously retrain/refresh with new data and attacks

- • Goal: Cost optimization at scale

Conclusion

In 2025, successful enterprise LLM fine-tuning and RAG implementation hinge on disciplined engineering: start lean, obsess over data and safety, evaluate rigorously, and deploy with observability. Organizations adopting these practices - treating fine-tuning and RAG as product disciplines rather than one-off experiments - unlock transformative value.

Key Takeaways

1. The Adaptation Spectrum is Your Friend: Don't default to fine-tuning. Evaluate the full range from prompt caching to RAG to PEFT.

2. Data Quality Trumps Quantity: 500-1,000 high-quality examples evolved with synthetic data often outperform 10,000 mediocre ones.

3. DoRA is the 2025 Standard: Move beyond vanilla LoRA to DoRA for better performance with similar efficiency.

4. Safety is Not Optional: Implement defense-in-depth with refusal training, circuit breakers, and continuous red-teaming.

5. RAG Delivers 80% of Fine-Tuning Value at 20% the Cost: For knowledge injection, RAG is often the better choice.

6. Distillation is the Ultimate Cost Saver: Teacher-student distillation can reduce inference costs by 25× while maintaining 90-95% performance.

The Path Forward

The landscape of enterprise LLM deployment evolves rapidly, with new techniques, tools, and best practices emerging monthly. However, the core principles outlined in this guide - strategic decision-making, data quality, safety-first design, rigorous evaluation, and production-ready deployment - remain the foundation of successful implementations.

Ready to Build Enterprise-Grade AI?

The organizations that succeed are those that treat LLM fine-tuning and RAG implementation as engineering disciplines - with proper processes, tooling, and governance - rather than experimental projects.

The best model is the one that solves your problem at the lowest cost with the highest reliability. Sometimes that's GPT-4 with RAG. Sometimes it's a fine-tuned Llama 3.1 8B. Sometimes it's a hybrid system routing queries across multiple models. The choice is yours - but now you have the knowledge to make it wisely.